MiniMax Put Out a Free CLI Agent and Nobody Knows What to Do With It

A developer on Reddit's r/ClaudeCode posted a guide showing how to get Qwen3.5, GLM-5, Kimi K2.5, and MiniMax M2.5 all under one API key for three dollars a month. The post hit 179 upvotes. The comments filled up fast. The thread title said it all: Alibaba's $3/month Coding Plan gives you four models in Claude Code.

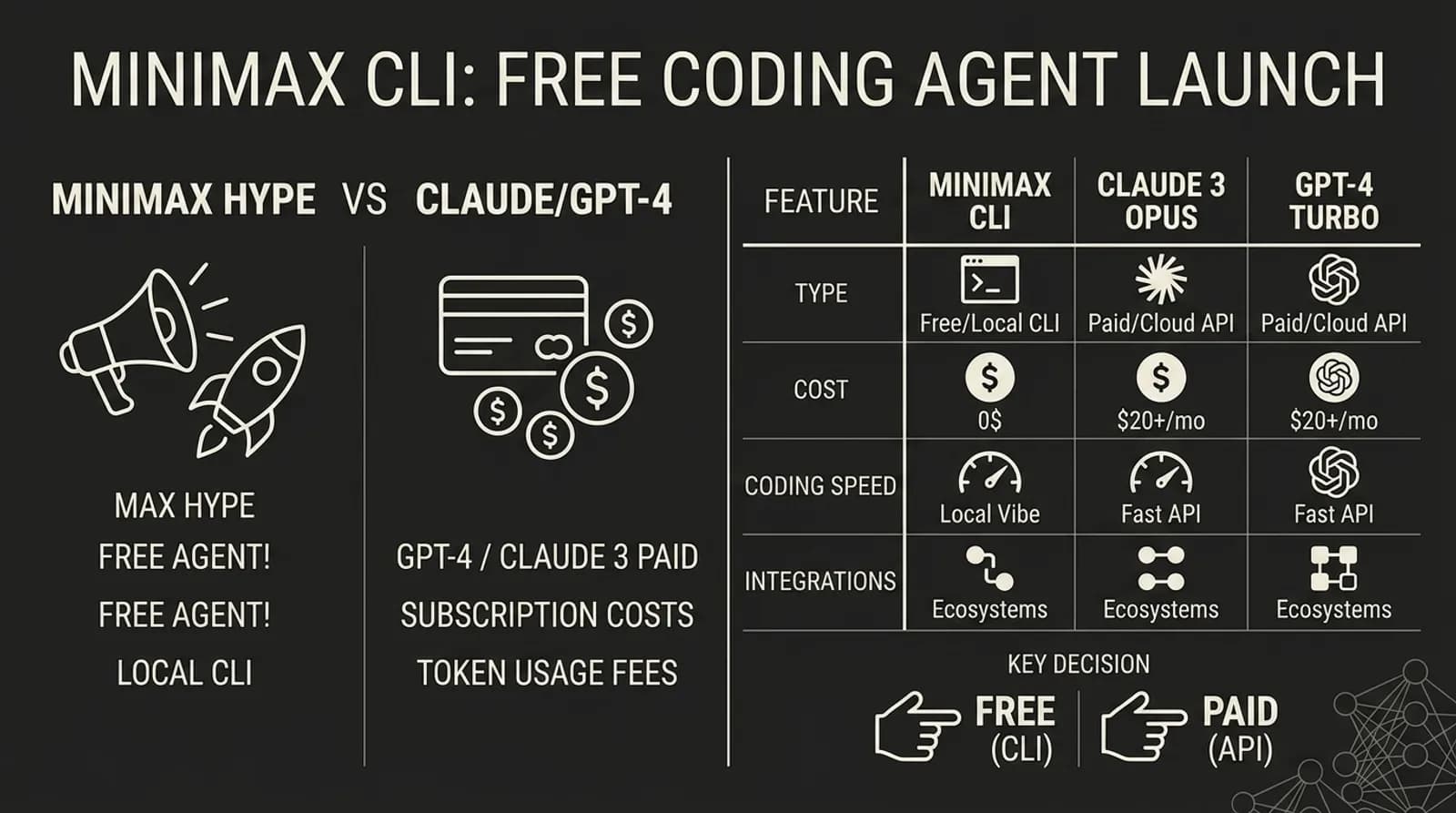

That post was not really about Alibaba. It was about something bigger. People are tired of paying $20 to $200 a month for AI coding tools that hit usage caps. And Chinese AI companies like MiniMax are noticing.

The Free Agent That Wants Your Attention

MiniMax dropped their Mini-Agent CLI tool in late 2025. It is a terminal-based coding agent. You install it with one command. You give it an API key from platform.minimax.io. And then it starts writing code, running scripts, editing files. All in your terminal.

The pitch was hard to ignore. No usage caps. No "session limit reached, resets at noon" messages. No $200 monthly bill from Anthropic.

Mike Slinn, a developer who wrote a detailed 42-minute review, put it bluntly: "MiniMax-M2 is not nearly as powerful a debugger as Claude." He described watching Mini-Agent struggle for four hours on a bug it created. Then he gave the same bug to Claude. Fifteen minutes later, done. Another time MiniMax burned through its entire 100-step quota and failed. Claude cleaned up the mess in 15 seconds.

But here is the thing Slinn also said: "If you have a lot of simple, repetitive work, Mini-Agent represents incredible value."

That split opinion shows up everywhere you look.

What People Are Actually Saying

The reactions fall into three camps, and none of them are boring.

Camp one says it works if you are patient. A user on Hacker News described using VS Code plus Cline plus OpenRouter plus MiniMax M2.7. They said it was better than Gemini 3 Flash but got expensive as context filled up because MiniMax did not support prompt caching on OpenRouter. The average task needed three to six revisions. Context filled up often. But for the price, they kept using it.
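The caching complaint is easier to see with arithmetic. Here is a rough sketch, assuming every revision re-sends the whole conversation at full input price because nothing is cached. The token counts and the per-million price are made-up placeholders, not MiniMax's or OpenRouter's actual rates.

```python
def cumulative_input_tokens(base_context: int, growth_per_turn: int, turns: int) -> int:
    """Total input tokens billed when each turn re-sends the full context uncached."""
    total = 0
    context = base_context
    for _ in range(turns):
        total += context            # the whole context is billed again this turn
        context += growth_per_turn  # the conversation grows after every revision
    return total


def input_cost_usd(tokens: int, price_per_million: float) -> float:
    """Input cost in dollars at a flat per-million-token price."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical numbers, chosen only to show the shape of the curve.
BASE_CONTEXT = 20_000      # tokens in the first request (assumption)
GROWTH_PER_TURN = 5_000    # tokens added by each revision (assumption)
PRICE_PER_MILLION = 0.30   # dollars per million input tokens (assumption)

for turns in (1, 3, 6):
    tokens = cumulative_input_tokens(BASE_CONTEXT, GROWTH_PER_TURN, turns)
    cost = input_cost_usd(tokens, PRICE_PER_MILLION)
    print(f"{turns} turn(s): {tokens} input tokens, ${cost:.4f}")
```

With these placeholder numbers, six revisions bill 195,000 input tokens against 20,000 for a single turn. That is almost ten times the input, not six. The growth is what makes "three to six revisions" sting.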

Another HN commenter said MiniMax M2.5 was "usable" but they still preferred the older grok-code-fast-1 model. Not a glowing endorsement. Not a dismissal either. Someone else on HN noted that MiniMax had "issues with heavy thinking models and client implementations with poor tool usage" when paired with Cline. The tool usage matters more than people think. A model that can code but cannot use a linter or run tests properly is like a carpenter who can measure but cannot hold a saw.

The pattern is clear. MiniMax works. But you need to babysit it more than Claude or GPT. You need to know when to step in and when to let it run.

Camp two says it finally got good. When MiniMax M2.7 dropped in April 2026, the tone shifted. A post on r/openclaw from a longtime Opus and Sonnet user described running benchmarks comparing M2.7 against GPT 5.4 and Gemini 3.1 Pro. MiniMax came out on top. Fastest to deliver a working result. They then tested multi-step tool chains that make most models fail. M2.7 passed. After five hours of active use, they said they had not missed Sonnet or Opus once.

The M2.7 announcement claimed 56.22% on SWE-Pro and 57.0% on Terminal Bench 2. It also said the model "deeply participated in its own evolution." Whatever that means.

Camp three says forget the model, look at the price. The GithubCopilot subreddit is not usually a place for measured analysis. But a viral post there summed up the frustration: "The Chinese models are now getting good, and catching up. Once they have reached a level of reliability, then no one will ever go back to western models." The post named GLM 5.1, MiniMax M2.7, and Kimi as the three reasons western providers should be worried.

That post got ratioed. But the sentiment is real. Pricing is the story.

The Weird Thing About Chinese AI Tools

There is something nobody talks about with these tools. The documentation is rough. Mike Slinn pointed out that MiniMax's registration requires a password with only alphanumeric characters and the error message is badly phrased in English. The Mini-Agent version stayed at 0.1.0 across multiple updates. The default system prompt was badly configured. Important features were not enabled out of the box.

This is not unique to MiniMax. Every Chinese AI tool I have tried has this same problem. The model can be strong but the wrapper around it feels like an afterthought. Claude Code feels polished. Mini-Agent feels like a grad student built it over a weekend and nobody did QA.

I once spent an hour trying to figure out why a Chinese AI tool kept failing silently. Turns out the error was in a log file written in Mandarin. No English fallback. No hint in the terminal output. Just quiet failure. I laughed. Then I closed the tab and went back to Claude.

And yet. The models keep getting better. The price stays low. The usage caps do not exist. Someone on r/LocalLLaMA asked if we are entering "China's Agent War Era" when both GLM 5.0 and MiniMax M2.5 dropped the same week. The framing was dramatic but the point stood. The competition shifted from "who writes better answers" to "who can actually finish the job."

It reminds me of early Xiaomi phones. Terrible software. Great hardware. Half the price. Everyone laughed. Then they did not.

Who Should Actually Use This

If you are a professional developer working on a serious codebase with tight deadlines, MiniMax is not replacing Claude or GPT. Not yet. The debugging gap is real. The documentation gap is real. When something breaks in production at 2am, you want the tool that fixes it in 15 seconds, not the one that burns through 100 steps and fails.

But if you are a solo developer, a student, someone prototyping ideas, or just someone who does not want to pay Anthropic $100 a month for coding help, MiniMax is worth trying. The M2.7 model genuinely closed the gap. The price makes the rough edges forgivable.

There is also a growing group of people using MiniMax through third-party routers like OpenRouter or Alibaba's Model Studio. This is where it gets interesting. You do not have to commit to one model. You bounce between MiniMax, GLM, Qwen, and Kimi depending on the task. Some are better at reasoning. Some are faster. Some are cheaper. You pick per task.
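The per-task picking can be as simple as a lookup table. This is a minimal sketch, not how OpenRouter, Model Studio, or any real tool does it. The task kinds, the short model labels, and which model lands on which task are all placeholders for illustration. They are not real API model IDs and not a claim about which model is actually best at what.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    name: str    # hypothetical label, not a real API model ID
    reason: str  # why this model was picked for the task kind


# Hypothetical routing table. The assignments are arbitrary examples.
ROUTES = {
    "reasoning": ModelChoice("kimi", "placeholder pick for multi-step reasoning"),
    "quick_edit": ModelChoice("minimax", "placeholder pick for fast, small edits"),
    "bulk_refactor": ModelChoice("qwen", "placeholder pick for cheap, repetitive work"),
}

# Fallback when the task kind is not in the table.
DEFAULT = ModelChoice("glm", "placeholder general-purpose fallback")


def pick_model(task_kind: str) -> ModelChoice:
    """Return the configured model for a task kind, or the fallback."""
    return ROUTES.get(task_kind, DEFAULT)


if __name__ == "__main__":
    for kind in ("reasoning", "quick_edit", "write_docs"):
        choice = pick_model(kind)
        print(f"{kind}: {choice.name} ({choice.reason})")
```

The table is the part you would tune. When one model keeps failing a kind of task, you change one line and move on.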

A user on Reddit's r/ClaudeCode described this setup perfectly. One subscription, four models, switch freely. The tool they built to manage this is called Clother. The plan itself runs three bucks for the first month. Eighteen thousand requests. That is not a typo.

The Alibaba bundle that includes MiniMax alongside Qwen, GLM, and Kimi for $3 a month is hard to argue with. You get four models to experiment with. One of them will probably work for your use case.

And that might be the real story. Not that MiniMax is the best coding agent. But that the floor for what counts as "good enough" just dropped to three dollars a month.

I keep thinking about that Reddit thread. 179 upvotes. 122 comments. People sharing config files and benchmark results like they found a cheat code. They did. It is just that the cheat code still crashes sometimes.