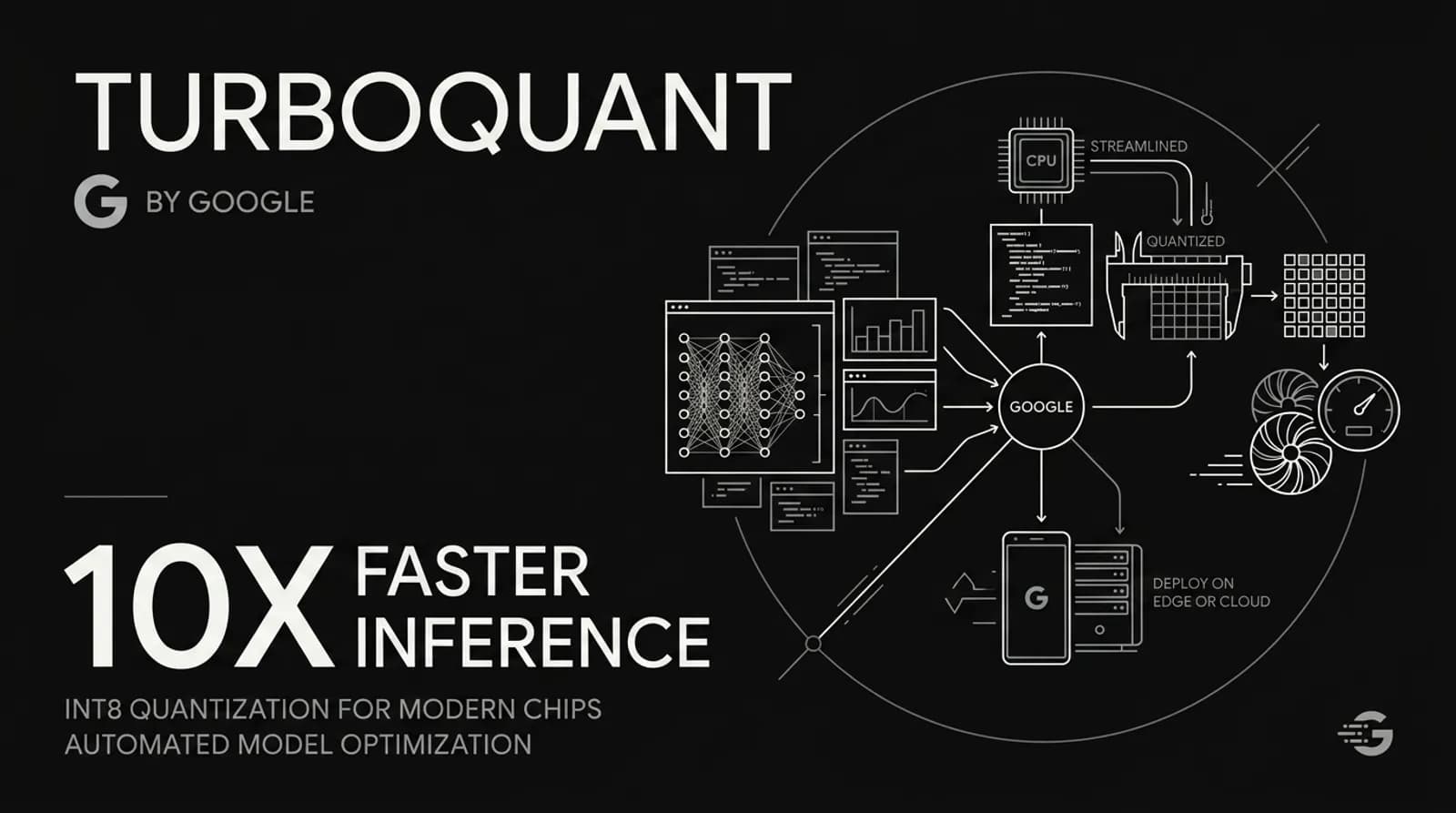

Google's TurboQuant: What It Is and Why It Actually Matters

The numbers are absurd. For one user running a single Llama-3.1-8B model at 128,000 tokens of context, the KV cache alone chews up 16 gigabytes of VRAM. On a 24GB GPU, that leaves 8GB, which is not even enough for the model's own weights: 8 billion parameters at 16 bits each need another 16GB.

This is not a hypothetical problem. This is what running long documents through an AI model looks like right now. Every token the model processes gets stored as a high-dimensional vector. Every single one. The cache grows linearly with context length, and the GPU memory runs out before the interesting part of the conversation even starts.

Google Research published TurboQuant on March 24, 2026. It is a compression algorithm that shrinks the KV cache down to 3 bits per value with no measurable accuracy loss in Google's benchmarks. Going from 16 bits to 3 turns that 16GB into about 3GB, and a full 6x reduction brings it closer to 2.6GB. The GPU breathes again.

The internet called it Pied Piper within hours.

How the KV Cache Bottleneck Works

Here is the simplest way to think about it. Every token an AI model processes (roughly a word or a piece of one) gets encoded into vectors, and at every layer the model computes a key vector and a value vector for it. The model stores these so it does not have to recompute them every time it generates the next token. This storage is the KV cache. "K" for key, "V" for value.
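To make the caching idea concrete, here is a toy, single-head sketch in Python. Everything in it is hypothetical: the class and function names are made up, and the query/key/value "projections" are trivial stand-ins for the learned weight matrices a real model uses. A real model also keeps one cache per layer and per KV head. What the sketch does show is the bookkeeping: each token's key and value are computed once, appended, and then reused by every later token.

```python
import math

class ToyKVCache:
    """Toy single-head KV cache: one key and one value vector per token seen."""

    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, key, value):
        self.keys.append(key)
        self.values.append(value)

    def attend(self, query):
        """Softmax attention of `query` over every cached key/value pair."""
        scale = math.sqrt(len(query))
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in self.keys]
        top = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out = [0.0] * len(self.values[0])
        for w, value in zip(weights, self.values):
            for i, v in enumerate(value):
                out[i] += (w / total) * v
        return out

def decode(token_vectors):
    """Process tokens one at a time; each token's key and value are computed once."""
    cache = ToyKVCache()
    outputs = []
    for x in token_vectors:
        # Hypothetical stand-ins for learned projections W_q, W_k, W_v.
        query, key, value = x, [2.0 * xi for xi in x], x
        cache.append(key, value)  # stored now, reused by every later token
        outputs.append(cache.attend(query))
    return cache, outputs

cache, outputs = decode([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
print(len(cache.keys))  # 3: one cached key per token processed
```

After three tokens the cache holds three key/value pairs. A 128K-token context holds 131,072 of them, per head, per layer.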

The problem is that each of these vectors is stored in high precision, usually 16-bit floating point. For a model like Llama-3.1-8B with 32 layers and 8 KV heads, running at 128K context, that is 131,072 tokens times 32 layers times 8 heads times 2 (a key and a value): roughly 67 million vectors of 128 numbers each. It adds up fast.
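The 16GB figure falls straight out of that multiplication. A minimal Python check, assuming 16-bit (2-byte) values and a head dimension of 128, which is what Llama-3.1-8B uses; the function name is just for illustration.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value):
    """Bytes needed to hold keys and values for every layer, KV head, and token."""
    # Factor of 2: one key vector and one value vector per head, per layer, per token.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value

# Llama-3.1-8B: 32 layers, 8 KV heads, head_dim 128.
# 128K context = 131,072 tokens; FP16/BF16 = 2 bytes per value.
size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                      seq_len=131_072, bytes_per_value=2)
print(f"{size / 2**30:.1f} GiB")  # prints "16.0 GiB"
```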

Long-context tasks are the main victim. Asking a model to read a 200-page legal contract, a codebase, or a research paper means the context window stretches way past where the KV cache can handle it gracefully. The model either runs out of memory or slows to a crawl.

Why Standard Quantization Hits a Wall

I used to think quantization was just about shrinking numbers. Compress everything, save space, done.

The reality is messier. When you compress vectors, you lose some information. The gap between what you stored and what the original looked like is called quantization error. In the KV cache, every slightly wrong vector feeds into every later attention step, so the damage compounds as the context grows. By the time you reach the end of a long document, a careless scheme leaves the model working with garbage.
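A quick way to see quantization error is to round a vector onto a uniform grid and measure how far it moves. This Python sketch uses plain min/max uniform quantization on random Gaussian data (an illustrative choice, not what TurboQuant does) and prints the mean squared error at several bit widths. Each bit removed roughly doubles the spacing between grid points, so the error grows several times over.

```python
import random

def quantize_uniform(xs, bits):
    """Round each value to one of 2**bits evenly spaced levels between min and max."""
    lo, hi = min(xs), max(xs)
    levels = 2 ** bits - 1
    step = (hi - lo) / levels if hi > lo else 1.0
    return [lo + round((x - lo) / step) * step for x in xs]

def mean_squared_error(a, b):
    """Average squared gap between the original and reconstructed values."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

random.seed(0)
vector = [random.gauss(0.0, 1.0) for _ in range(128)]
for bits in (8, 4, 3, 2):
    err = mean_squared_error(vector, quantize_uniform(vector, bits))
    print(f"{bits}-bit MSE: {err:.5f}")
```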

Here is a question people always ask: why not just store less precision? The catch is that most quantization approaches need to store "quantization constants" alongside the compressed values. This is metadata that tells the model how to decompress each block. Sometimes this overhead adds 1-2 extra bits per number. You are compressing but also carrying extra baggage, and the net savings shrink.

Most tutorials tell you quantization saves memory. They do not mention the overhead tax that eats the gains.
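The overhead tax is simple arithmetic. Suppose each block of values carries a 16-bit scale and a 16-bit zero-point (a common layout, used here as an assumption; the block sizes are illustrative). This Python sketch computes the real storage cost per value:

```python
def effective_bits_per_value(payload_bits, block_size, constant_bits, constants_per_block=2):
    """Average storage cost per value once per-block quantization constants are counted.

    Assumes each block of `block_size` values stores `constants_per_block`
    constants (for example a scale and a zero-point) of `constant_bits` each.
    """
    overhead = constants_per_block * constant_bits / block_size
    return payload_bits + overhead

# 4-bit values with a 16-bit scale and a 16-bit zero-point per block.
for block in (64, 32, 16):
    print(block, effective_bits_per_value(payload_bits=4, block_size=block, constant_bits=16))
# 64 4.5
# 32 5.0
# 16 6.0
```

With 4-bit values, blocks of 32 cost a full extra bit per value and blocks of 16 cost two, which is the 1-2 bit overhead described above.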

How TurboQuant Actually Solves This

The first time I read the TurboQuant paper, the insight behind its first stage, PolarQuant, sounded almost too simple to work. Rotate the data randomly. After rotation, the angles of the vectors become highly predictable. They cluster in a known pattern.
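Here is a small Python sketch of why rotation helps. It uses a randomized Hadamard transform (random sign flips followed by a Walsh-Hadamard transform), a cheap and common stand-in for a dense random rotation; it is a swapped-in construction, not necessarily the one TurboQuant uses. A vector with one huge outlier has a peak about 8 times its RMS value. After rotation the same energy is spread almost evenly across all 64 coordinates, so every coordinate lands in a narrow, predictable range.

```python
import math
import random

def fast_walsh_hadamard(xs):
    """Orthonormal Walsh-Hadamard transform; len(xs) must be a power of two."""
    x = list(xs)
    n = len(x)
    h = 1
    while h < n:
        for i in range(0, n, 2 * h):
            for j in range(i, i + h):
                a, b = x[j], x[j + h]
                x[j], x[j + h] = a + b, a - b
        h *= 2
    scale = 1.0 / math.sqrt(n)
    return [v * scale for v in x]

def random_rotation(xs, seed):
    """Randomized Hadamard rotation: flip signs at random, then transform.

    The seed stands in for shared state, so the same rotation can be undone later.
    """
    rng = random.Random(seed)
    signs = [rng.choice((-1.0, 1.0)) for _ in xs]
    return fast_walsh_hadamard([s * v for s, v in zip(signs, xs)])

def peak_to_rms(xs):
    """Largest magnitude divided by root-mean-square: high means outliers."""
    rms = math.sqrt(sum(v * v for v in xs) / len(xs))
    return max(abs(v) for v in xs) / rms

# One huge outlier channel, the kind that wrecks a min/max quantizer.
vec = [0.1] * 64
vec[5] = 20.0
rotated = random_rotation(vec, seed=42)
print(f"before: {peak_to_rms(vec):.1f}, after: {peak_to_rms(rotated):.1f}")
```

Because the rotation is orthogonal, the vector's length is unchanged, and the same seeded rotation can be inverted after decompression.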

Think of it like this. You are describing a location. Option one: "go 3 blocks east, 4 blocks north." Option two: "go 5 blocks total, at 37 degrees east of north." Both describe the same point, but if you already know which angles to expect, option two can be stored with very few bits. No need to store a custom scale for every single vector.

Because the geometry of the data is known and concentrated, TurboQuant does not need to store expensive normalization constants for every block. It maps data onto a fixed, predictable grid.
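The location analogy translates directly into code. This Python sketch is a two-dimensional toy, not PolarQuant's actual recursive scheme: it converts a pair of coordinates to a radius and an angle, then snaps the angle onto a fixed grid that depends only on the bit width. Because the grid never depends on the data, no per-block scale or zero-point has to be stored. The function names are hypothetical.

```python
import math

def to_polar(x, y):
    """Return (radius, angle in radians measured counterclockwise from east)."""
    return math.hypot(x, y), math.atan2(y, x)

def quantize_angle(theta, bits):
    """Snap an angle to a fixed grid of 2**bits directions around the circle.

    The grid depends only on `bits`, never on the data, so nothing extra
    has to be stored alongside the code.
    """
    levels = 2 ** bits
    step = 2 * math.pi / levels
    return round(theta / step) % levels

def dequantize_angle(code, bits):
    return code * 2 * math.pi / 2 ** bits

# "3 blocks east, 4 blocks north": radius 5 at about 53 degrees from east,
# which is the same direction as about 37 degrees east of north.
r, theta = to_polar(3.0, 4.0)
code = quantize_angle(theta, bits=4)
theta_hat = dequantize_angle(code, bits=4)
x_hat, y_hat = r * math.cos(theta_hat), r * math.sin(theta_hat)
print(f"radius {r}, angle {math.degrees(theta):.2f} deg, code {code}, "
      f"reconstructed ({x_hat:.2f}, {y_hat:.2f})")
```

At 4 bits the angle snaps to 45 degrees, so the reconstructed point is off by about half a block in each direction. More bits, or a grid concentrated where the angles actually cluster, shrink that error.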

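The second stage handles the leftover error. Even PolarQuant leaves a small residual. TurboQuant applies a 1-bit Quantized Johnson-Lindenstrauss (QJL) transform to that residual: it randomly projects the residual and keeps only the sign of each projection, a single bit that is either +1 or -1. This works less like an error-checker and more like an error-canceler. The sign bits give an unbiased estimate of the query-key inner products that attention scores are built from, which removes the bias that would otherwise accumulate and cause the model to drift off track.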
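Here is a minimal Python sketch of the QJL idea, assuming Gaussian random projections; the names are hypothetical and this is not Google's implementation. The residual is stored as one sign bit per projection plus its length, while the query stays in full precision. A standard identity for Gaussian vectors makes the inner-product estimate unbiased: its errors scatter around zero instead of pushing attention in one direction. The 4,000 projections are far more than a real system would spend on a 16-dimensional vector; they are there so the average visibly converges.

```python
import math
import random

def qjl_encode(residual, projections):
    """Store one sign bit per random projection, plus the residual's length."""
    signs = []
    for s in projections:
        dot = sum(s_i * r_i for s_i, r_i in zip(s, residual))
        signs.append(1 if dot >= 0 else -1)
    norm = math.sqrt(sum(r * r for r in residual))
    return signs, norm

def qjl_inner_product(query, signs, norm, projections):
    """Estimate <query, residual> from the sign bits; the query stays full precision.

    For Gaussian s: E[<s, q> * sign(<s, r>)] = sqrt(2/pi) * <q, r> / |r|,
    so rescaling the average by sqrt(pi/2) * |r| makes the estimate unbiased.
    """
    total = 0.0
    for bit, s in zip(signs, projections):
        total += bit * sum(s_i * q_i for s_i, q_i in zip(s, query))
    return math.sqrt(math.pi / 2) * norm * total / len(projections)

rng = random.Random(7)
d, m = 16, 4000
projections = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]
residual = [rng.gauss(0.0, 0.1) for _ in range(d)]  # small leftover error
query = [rng.gauss(0.0, 1.0) for _ in range(d)]

signs, norm = qjl_encode(residual, projections)
exact = sum(q * r for q, r in zip(query, residual))
estimate = qjl_inner_product(query, signs, norm, projections)
print(f"exact {exact:.4f}  estimate {estimate:.4f}")
```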

The result is 3-bit compression with no accuracy loss. No fine-tuning required. No training. Just math.

Sixteen gigabytes becomes somewhere between two and a half and three. That is the whole story in one sentence.
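The arithmetic behind that sentence, as a quick Python check. Dropping from 16 bits to 3 bits per value shrinks 16GB to 3GB; the roughly 2.6GB figure corresponds to a 6x reduction, which works out to about 2.7 bits per value. The function names are illustrative.

```python
def compressed_size(original_size, original_bits, new_bits):
    """Size after re-encoding every value from original_bits down to new_bits."""
    return original_size * new_bits / original_bits

def bits_for_reduction(original_bits, reduction):
    """Bits per value implied by a given overall reduction factor."""
    return original_bits / reduction

print(compressed_size(16, 16, 3))           # 3.0 (GB) at 3 bits per value
print(round(16 / 6, 2))                     # 2.67 (GB) at a 6x reduction
print(round(bits_for_reduction(16, 6), 2))  # 2.67 bits per value
```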

On NVIDIA H100 GPUs, 4-bit TurboQuant achieved up to an 8x speedup in attention computation. Memory chip stocks dipped on news of the release. Cloudflare's CEO called it Google's DeepSeek moment.

The Hype, The Reality, and the Joke

The community moved fast. Within 24 hours of the paper dropping, developers were porting TurboQuant to llama.cpp, MLX for Apple Silicon, vLLM, and SGLang. One developer got it running on Apple Silicon with Metal GPU support: Qwen-3.5-35B-A3B at 4.9x compression, with 98.7-99.5% quality retention through 32K context. The numbers closely matched Google's benchmarks. The algorithm works outside the lab.

The Needle-in-a-Haystack test asks whether an AI can find a single specific sentence buried inside 100,000 words. TurboQuant passed it, posting perfect recall scores while shrinking the KV cache footprint by 6x. This "quality neutrality" is rare. At 3-bit compression, most systems suffer serious degradation in reasoning and accuracy. TurboQuant does not.
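For readers who want to try this on their own setup, here is a sketch of how a needle-in-a-haystack prompt is typically assembled and scored. It is not Google's evaluation harness: the filler sentences, the passphrase, the exact-match scoring rule, and the commented-out `ask_model` call are all hypothetical placeholders. A real run would sweep the depth and total length, and compare a full-precision cache against a compressed one.

```python
import random

def build_haystack_prompt(filler_sentences, needle, depth, total_sentences, seed=0):
    """Bury `needle` at a relative `depth` (0.0 = start, 1.0 = end) in filler text."""
    rng = random.Random(seed)
    haystack = [rng.choice(filler_sentences) for _ in range(total_sentences)]
    position = round(depth * total_sentences)
    haystack.insert(position, needle)
    return " ".join(haystack), position

def recall_score(model_answer, expected_fact):
    """Crude scoring: 1.0 if the expected fact appears verbatim in the answer."""
    return 1.0 if expected_fact.lower() in model_answer.lower() else 0.0

filler = ["The sky was grey over the harbor.", "Invoices are due on the first."]
needle = "The secret passphrase is blue-falcon-42."
prompt, pos = build_haystack_prompt(filler, needle, depth=0.5, total_sentences=1000)
question = prompt + "\n\nWhat is the secret passphrase?"
# answer = ask_model(question)  # hypothetical call to whatever model is under test
print(pos, recall_score("It is blue-falcon-42.", "blue-falcon-42"))
```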

One researcher on Hacker News pointed out that the core technique of rotation before extreme quantization was introduced in a 2021 NeurIPS paper called DRIVE. Google acknowledged the prior work, but the researcher felt the credit did not go far enough. The discussion got heated fast. This is the kind of thing that happens in fast-moving research, where multiple teams often arrive at similar ideas independently.

The Pied Piper comparison was instant. HBO's Silicon Valley ran from 2014 to 2019 and its fictional startup built a compression algorithm that changed everything. Google's TurboQuant is also about extreme compression without quality loss, applied to AI working memory. The joke is earned.

But Pied Piper was going to compress anything and everything. TurboQuant solves one specific bottleneck in transformer models. That is what real breakthroughs usually look like: narrow and concrete, not a magic algorithm that does it all.

Who Actually Needs This

If you are running AI in a commercial setting, this changes your infrastructure planning. Inference costs come down, longer context windows become affordable, and single-GPU deployments get more capable.

If you are a developer working with open-source models on consumer hardware, you benefit too. Fitting a 35B-parameter model at long context onto Apple Silicon or a single mid-range GPU was not realistic before. Now it is getting close.

If you are just using a chatbot for short conversations, this does not change much for you. Shorter tasks do not hit the KV cache bottleneck hard enough to feel the difference.

Most people will feel this indirectly through cheaper and faster AI services over the next year or two. The people running into the memory wall right now feel it immediately.

One more thing worth knowing: this is not a fix for training. TurboQuant targets inference memory, not the training process, and training still needs massive amounts of GPU memory. If you thought this would eliminate GPU memory shortages broadly, that is not what this does.

The Math Is Harder Than the Results

I spent thirty minutes reading a blog post where someone spent 31 hours breaking down the mathematics behind TurboQuant so readers would not have to. That is honest. The paper is dense: the two-stage compression, the polar coordinate transformation, the Johnson-Lindenstrauss lemma. It is not light reading.

But the results are clean enough to trust, and the community validation came faster than the paper's authors probably expected. Multiple independent implementations have validated the core claims. The compression ratios hold. The accuracy loss is genuinely minimal. The speedups on H100 hardware are real.

This is what good research looks like. Not a flashy demo that falls apart in production, but a solid mathematical foundation with reproducible results that the community can build on within weeks.

The next time you run a model on a long document and it does not crash your GPU, you might have Google Research to thank. Or maybe the developer who spent six hours figuring out why Metal was silently routing everything to the CPU. Probably both.